What to Expect From an AEO/GEO Engagement

This guide covers AEO/GEO engagement deliverables, measurement challenges, proxy KPIs, the 6-12 month outlook, and what month-by-month progress looks like.

We see a clear shift in how people search across the Malaysian digital landscape right now. Nearly half of all internet users in Malaysia engaged with ChatGPT in the past month alone. That 48.4 percent adoption rate changes how customers discover your brand.

Our AEO/GEO engagements focus on capturing this new traffic source, so you should know exactly what a generative engine optimisation (GEO) campaign delivers.

What an AEO/GEO retainer actually ships

We build these campaigns because ranking number one on Google is no longer the sole objective. Over one billion people globally use generative AI tools every month to find direct answers. This massive shift requires a different tactical approach.

Our Premium-tier engagement at RM 4,500 per month ships specific assets to capture that audience. Six months into a typical engagement, the client will have received:

- A complete entity-completeness audit with prioritised gap-fix list

- Wikipedia and Wikidata alignment work where eligible

- Organisation, FAQ, HowTo, and Article schema overhauled and validated

- Authoritative third-party citations earned (digital PR, podcast appearances, industry-publication contributions)

- Prompt-testing baseline established with 30-50 buyer-intent prompts

- Quarterly scorecard documenting brand-mention growth in LLM outputs

We provide these deliverables because AI systems rely on consistent, well-structured entity data and third-party corroboration before they confidently cite a brand. What you are paying for is systematic entity and citation work that traditional SEO retainers do not cover.

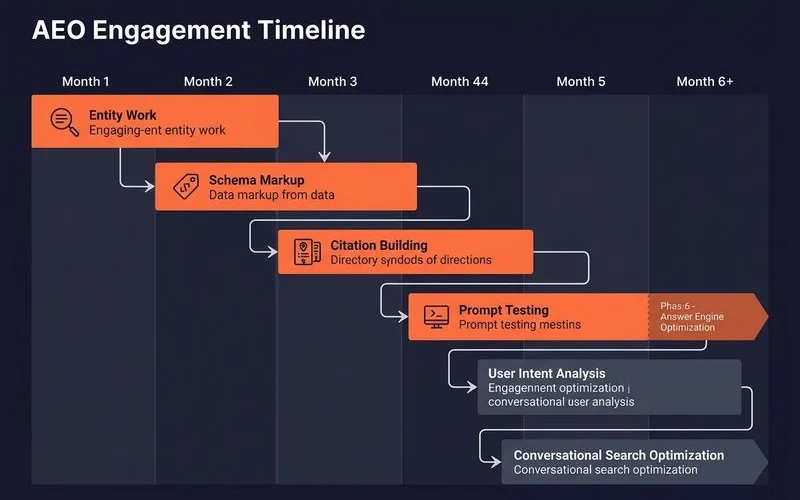

Month-by-month deliverables

We structure the GEO engagement timeline into distinct execution phases. Each phase builds the proof and clarity that AI assistants require. A 2026 study by e intelligence shows that AI engines extract content differently from traditional search crawlers.

Month 1: AI visibility audit

We start by defining your baseline visibility across the major AI platforms. ChatGPT processes over 2.5 billion queries per day. You need to know exactly where you stand in that ecosystem.

- Run 30-50 buyer-intent prompts across ChatGPT, Perplexity, Gemini, and Google AI Overviews

- Document where the brand is cited, where it is missing, and which competitors are cited instead

- Entity-completeness audit (Wikipedia, Wikidata, schema, third-party mentions)

- Gap-fix prioritisation against competitive set
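The baseline audit above boils down to a simple table of results: for each prompt and each AI surface, which brands were cited. Below is a minimal sketch of how those manual test results could be recorded and summarised. The `PromptResult` record, the `baseline_summary` helper, and the brand names "OurBrand", "AgencyA" and "AgencyB" are all hypothetical placeholders, not part of any tool named in this guide. The sketch computes a per-platform citation rate and, for answers that left the brand out, which competitors appeared instead.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptResult:
    """One manual prompt test: the brands a given AI surface cited in its answer."""
    prompt: str
    platform: str                  # e.g. "ChatGPT", "Perplexity", "Gemini", "AI Overviews"
    brands_cited: frozenset[str]


def baseline_summary(results, brand):
    """Return the per-platform citation rate for `brand` and, per platform,
    how often each other brand was cited in answers that left `brand` out."""
    totals = Counter()
    cited = Counter()
    competitors_instead = defaultdict(Counter)
    for r in results:
        totals[r.platform] += 1
        if brand in r.brands_cited:
            cited[r.platform] += 1
        else:
            competitors_instead[r.platform].update(r.brands_cited)
    rates = {platform: cited[platform] / totals[platform] for platform in totals}
    return rates, dict(competitors_instead)


# Hypothetical test results; all brand names are placeholders.
results = [
    PromptResult("best SEO agency in Kuala Lumpur", "ChatGPT", frozenset({"AgencyA", "AgencyB"})),
    PromptResult("best SEO agency in Kuala Lumpur", "Perplexity", frozenset({"OurBrand", "AgencyA"})),
    PromptResult("AEO services Malaysia", "ChatGPT", frozenset({"OurBrand"})),
    PromptResult("AEO services Malaysia", "Perplexity", frozenset()),
]
rates, competitors_instead = baseline_summary(results, "OurBrand")
print(rates)  # {'ChatGPT': 0.5, 'Perplexity': 0.5}
```

Keeping one record per prompt and platform means the same structure can be re-used unchanged when the prompt set is re-tested later.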

Month 2-3: Entity and schema fixes

Our focus shifts to technical structuring so AI systems can retrieve accurate facts about your brand and are less likely to hallucinate. Clean semantic structure makes it easier for AI systems to include your brand in their responses.

- Schema overhaul: Organisation with knowsAbout, sameAs, and hasCredential; FAQ schema on relevant pages; HowTo on process content; Article on guides

- Wikipedia/Wikidata alignment work (if the brand qualifies for inclusion)

- LinkedIn, Crunchbase, industry-directory consistency

- llms.txt rollout where appropriate
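To make the Organisation schema step concrete, here is a minimal sketch that builds a JSON-LD block with the three properties named above. Note that the schema.org type itself is spelled `Organization`, and `hasCredential` expects an `EducationalOccupationalCredential`. The agency name, URLs, Wikidata ID and credential below are hypothetical placeholders, and the helper `organisation_jsonld` is illustrative only; a real rollout would also validate the output with a structured-data testing tool.

```python
import json


def organisation_jsonld(name, url, knows_about, same_as, credentials):
    """Build a schema.org Organization node with knowsAbout, sameAs and hasCredential."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "knowsAbout": list(knows_about),
        # sameAs ties the entity to its profiles elsewhere (LinkedIn, Crunchbase, Wikidata).
        "sameAs": list(same_as),
        "hasCredential": [
            {"@type": "EducationalOccupationalCredential", "name": c}
            for c in credentials
        ],
    }


# All values below are placeholders.
org = organisation_jsonld(
    name="Example Agency Sdn Bhd",
    url="https://www.example.com",
    knows_about=["Answer engine optimisation", "Generative engine optimisation"],
    same_as=[
        "https://www.linkedin.com/company/example",
        "https://www.crunchbase.com/organization/example",
        "https://www.wikidata.org/wiki/Q000000",
    ],
    credentials=["Example industry certification"],
)
# Embed the result in the page as a JSON-LD script tag.
print(f'<script type="application/ld+json">{json.dumps(org, indent=2)}</script>')
```

Generating the markup from one source of truth helps keep the `sameAs` links and entity facts identical across every page that carries the block.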

Month 3-6: Citation-pattern building

We move into active authority building because platforms like Perplexity demand credible external references. Publishing content without external verification is a common reason brands fail to appear in AI answers.

- Digital PR campaigns to earn authoritative third-party mentions

- Podcast appearances and conference speaking pitches

- Original research or data publishing for citation-attractive content

- Question-led content rollout aligned to prompt-set gaps

Month 6: First quarterly scorecard

Our first major review documents the tangible progress made during the initial phases. AI visibility requires constant monitoring as model updates roll out.

- Re-test prompt set across all four surfaces

- Document brand-mention growth quarter-on-quarter

- Citation-share trend versus competitors

- Updated gap list for next quarter
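A quarterly scorecard compares two re-tests of the same fixed prompt set. The sketch below, with hypothetical helper names and placeholder prompt IDs, reports both the absolute change (in percentage points) and the relative change, because "percent improvement" in citation rate can mean either. It also derives the next quarter's gap list from the prompts that still do not cite the brand.

```python
def citation_rate(cited_prompts, prompt_set):
    """Share of the fixed prompt set in which the brand was cited."""
    return len(cited_prompts & prompt_set) / len(prompt_set)


def quarterly_scorecard(prompt_set, previous_cited, current_cited):
    """Compare two re-tests of the same fixed prompt set."""
    prev = citation_rate(previous_cited, prompt_set)
    curr = citation_rate(current_cited, prompt_set)
    return {
        "previous_rate": prev,
        "current_rate": curr,
        # Absolute change as a fraction of the prompt set (0.30 = 30 percentage points).
        "change_points": curr - prev,
        # Relative change; undefined when the brand was never cited before.
        "relative_change": (curr - prev) / prev if prev else None,
        "newly_cited": sorted((current_cited - previous_cited) & prompt_set),
        "lost": sorted((previous_cited - current_cited) & prompt_set),
        # Prompts still missing the brand: the starting point for next quarter's gap list.
        "gap_list": sorted(prompt_set - current_cited),
    }


prompt_set = {f"prompt-{i:02d}" for i in range(10)}  # placeholder prompt IDs
q1 = {"prompt-00"}
q2 = {"prompt-00", "prompt-03", "prompt-04", "prompt-07"}
card = quarterly_scorecard(prompt_set, q1, q2)
print(card["newly_cited"])  # ['prompt-03', 'prompt-04', 'prompt-07']
```

In this placeholder run the rate moves from 10 to 40 percent: a 30-point absolute gain, but a 300 percent relative gain, which is why a scorecard should state which one it reports.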

Month 9-12: Compounding

We expect the real return on investment to appear in this late phase. AI training-data cycles are slow, so the entity and citation work from earlier months takes time to surface in model outputs.

- Brand citations in LLMs start appearing for prompts where the brand was invisible at month 0

- Re-tested prompt set shows 30-60 percent improvement in citation rate

- Traffic from AI-aware bots starts appearing in logs (early indicator of AI-search referral)
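One way to spot AI-aware bot traffic is to scan web server access logs for known AI user-agent tokens. The sketch below assumes the common Combined Log Format and uses a short, possibly incomplete list of publicly documented tokens (GPTBot, OAI-SearchBot, ChatGPT-User, PerplexityBot, Perplexity-User, ClaudeBot). User-agent strings can be spoofed, and verifying them against the providers' published IP ranges is out of scope here. It also counts requests for `/llms.txt` from any client. The log lines use documentation IP addresses and are made up.

```python
import re
from collections import Counter

# Combined Log Format: ... "METHOD PATH PROTOCOL" status bytes "referer" "user-agent"
LOG_RE = re.compile(r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<ua>[^"]*)"')

# Assumed, non-exhaustive list of AI crawler / fetcher user-agent tokens.
AI_BOT_TOKENS = ("GPTBot", "OAI-SearchBot", "ChatGPT-User", "PerplexityBot", "Perplexity-User", "ClaudeBot")


def count_ai_bot_hits(lines):
    """Count requests per AI bot token, plus llms.txt requests from any client."""
    by_bot = Counter()
    llms_txt_hits = 0
    for line in lines:
        m = LOG_RE.search(line)
        if not m:
            continue  # skip lines that are not in the expected format
        if m["path"].split("?", 1)[0] == "/llms.txt":
            llms_txt_hits += 1
        bot = next((token for token in AI_BOT_TOKENS if token in m["ua"]), None)
        if bot is not None:
            by_bot[bot] += 1
    return by_bot, llms_txt_hits


sample = [
    '203.0.113.5 - - [10/Jan/2026:10:00:00 +0000] "GET /llms.txt HTTP/1.1" 200 512 "-" '
    '"Mozilla/5.0 (compatible; GPTBot/1.2; +https://openai.com/gptbot)"',
    '198.51.100.7 - - [10/Jan/2026:10:05:00 +0000] "GET /services/aeo HTTP/1.1" 200 2048 "-" '
    '"Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko; compatible; ChatGPT-User/1.0; +https://openai.com/bot)"',
    '192.0.2.9 - - [10/Jan/2026:10:06:00 +0000] "GET / HTTP/1.1" 200 4096 "https://www.google.com/" '
    '"Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]
by_bot, llms_txt_hits = count_ai_bot_hits(sample)
print(by_bot, llms_txt_hits)
```

Running the same count over monthly log exports gives a simple trend line for the "early indicator" described above.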

Measurement challenges

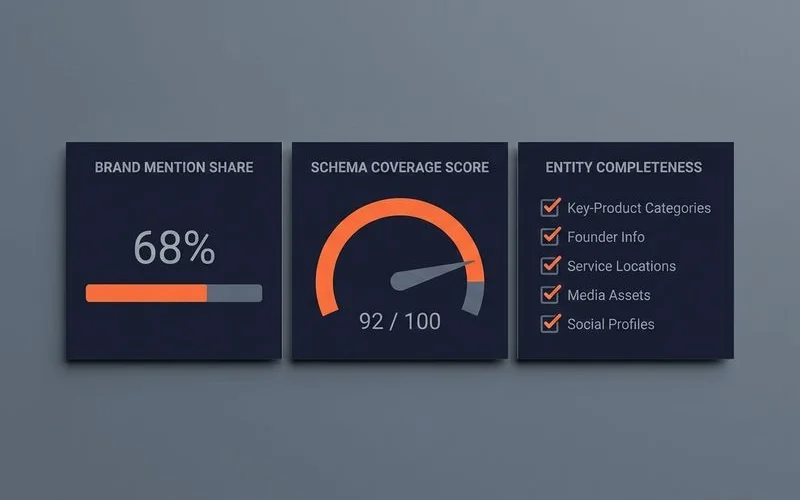

Reporting is harder here because the major AI platforms lack standard analytics dashboards: OpenAI and Perplexity do not yet offer complete analytics consoles for content optimisers. That limitation forces us to rely on specialised external measurement methods.

Our team measures AEO deliverables using a combination of manual verification and dedicated third-party software. Tools like Profound and Semrush AIO help benchmark your share of answer across different language models. Until native reporting arrives, AEO measurement relies on these tactics:

- Manual prompt-set re-testing (quarterly minimum, monthly for critical brands)

- Brand-mention frequency in LLM outputs against a fixed prompt set

- Citation share-of-voice versus direct competitors

- llms.txt referrer traffic in logs (small now, growing)

- AI Overview impressions in Google Search Console (where AI Overviews trigger for tracked queries)

- Third-party tools (Surfer AEO, Profound, AthenaHQ, Botify AI Visibility)

We document these in a quarterly AEO scorecard for retainer clients.
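Citation share-of-voice has no single industry definition. The sketch below assumes one simple version: each tracked brand's share of all tracked-brand citations across a set of AI answers, counting a brand at most once per answer. The function name and the brand names are hypothetical placeholders, and third-party tools may weight results differently.

```python
from collections import Counter


def share_of_voice(answers, tracked_brands):
    """Each tracked brand's share of all tracked-brand citations across a prompt set.

    answers: one collection of cited brand names per AI answer.
    A brand counts at most once per answer.
    """
    counts = Counter()
    for cited in answers:
        counts.update(set(cited) & set(tracked_brands))
    total = sum(counts.values())
    return {b: counts[b] / total if total else 0.0 for b in tracked_brands}


# Placeholder data: brands cited in four AI answers to category prompts.
answers = [
    {"OurBrand", "CompetitorA"},
    {"CompetitorA"},
    {"CompetitorA", "CompetitorB"},
    {"OurBrand"},
]
sov = share_of_voice(answers, ["OurBrand", "CompetitorA", "CompetitorB"])
print(sov["CompetitorA"])  # 0.5
```

Because the shares always sum to 1 across the tracked set, a gain for the brand shows up directly as a loss for one or more competitors, which is the movement the quarterly scorecard tracks.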

What “on track” looks like at month 12

We define success through clear, verifiable metrics after a full year of execution. For a Premium-tier engagement on a brand with a reasonable starting position, the following benchmarks indicate the campaign is on track. They matter because Perplexity alone exceeded 33 million active users in early 2026, and capturing even a fraction of those high-intent buyers depends on meeting them:

- 30-60 percent of priority prompts now cite the brand (vs ~10 percent at baseline)

- Citation share-of-voice has moved up versus 1-2 direct competitors

- Schema coverage is comprehensive across all key page types

- Wikipedia or Wikidata entry exists if eligible

- Entity attributes are consistent across LinkedIn, Crunchbase, industry directories

- 5+ authoritative third-party mentions earned in the last 6 months

- Traffic from llms.txt-aware bots is non-zero and growing
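The entity-consistency benchmark can be checked mechanically once the key facts from each profile are collected. Below is a minimal sketch, assuming the attributes have already been copied by hand from the website, LinkedIn and Crunchbase (no API calls are made). The helper names, the loose normalisation rule, and the sample values are hypothetical.

```python
def normalise(value):
    """Loose normalisation so case, extra whitespace and a trailing slash don't count as differences."""
    return " ".join(value.strip().lower().split()).rstrip("/")


def inconsistent_attributes(profiles):
    """Return attributes whose normalised values differ across sources.

    profiles: mapping of source name -> {attribute: value}
    """
    values = {}
    for source, attrs in profiles.items():
        for attr, value in attrs.items():
            values.setdefault(attr, {})[source] = value
    return {
        attr: by_source
        for attr, by_source in values.items()
        if len({normalise(v) for v in by_source.values()}) > 1
    }


# Placeholder profile data collected manually from each source.
profiles = {
    "website": {"name": "Example Agency Sdn Bhd", "url": "https://www.example.com/", "founded": "2015"},
    "linkedin": {"name": "example agency sdn bhd ", "url": "https://www.example.com", "founded": "2015"},
    "crunchbase": {"name": "Example Agency Sdn Bhd", "url": "https://www.example.com", "founded": "2016"},
}
print(inconsistent_attributes(profiles))
```

Here only the founding year is flagged, because the name and URL differ only in case, spacing or a trailing slash; anything the normalisation does not absorb becomes a fix-list item.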

What “off track” looks like

We sometimes encounter friction points that require immediate strategic pivots. Recognising these warning signs early is essential for course correction. If you reach month 9-12 and see these indicators, the campaign requires attention:

- Citation rate flat at baseline despite all the work

- Schema validation failures going unaddressed

- No earned third-party mentions in 6 months

In that case, the retainer needs an immediate re-audit and a fresh strategy session. We often find that the original prompt set was poorly chosen or too competitive. Sometimes a direct competitor has aggressively escalated its own GEO efforts. Our entity work might also need a different semantic angle to trigger citations.
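The on-track and off-track lists above can be encoded as a simple month-12 check. This is a sketch under stated assumptions, not a formal scoring model: the `Month12Snapshot` fields, the 2-point `flat_tolerance` used to decide that a citation rate is "flat at baseline", the rule that any open schema error at month 12 counts as unaddressed, and the intermediate "mixed" status are all hypothetical choices. The 30 percent citation-rate floor and the five-mention threshold come from the benchmarks in this guide.

```python
from dataclasses import dataclass


@dataclass
class Month12Snapshot:
    baseline_rate: float          # share of priority prompts citing the brand at month 0
    current_rate: float           # same prompt set, re-tested now
    mentions_last_6_months: int   # authoritative third-party mentions earned
    open_schema_errors: int       # validation failures still open (assumed unaddressed)
    sov_change: float             # change in citation share-of-voice vs competitors


def assess(s, flat_tolerance=0.02):
    """Return (status, reasons): off-track warnings first, then on-track benchmarks."""
    reasons = []
    if s.current_rate - s.baseline_rate <= flat_tolerance:
        reasons.append("citation rate flat at baseline")
    if s.open_schema_errors > 0:
        reasons.append("schema validation failures unaddressed")
    if s.mentions_last_6_months == 0:
        reasons.append("no earned third-party mentions in 6 months")
    if reasons:
        return "off track", reasons
    on_track = s.current_rate >= 0.30 and s.mentions_last_6_months >= 5 and s.sov_change > 0
    return ("on track" if on_track else "mixed"), reasons


print(assess(Month12Snapshot(0.10, 0.42, 6, 0, 0.05)))  # ('on track', [])
```

Any "off track" result with reasons attached is the trigger for the re-audit and strategy session described above.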

Realistic outlook

We always set the expectation that AEO/GEO is a 12- to 24-month investment. You need this runway to see meaningful citation share-of-voice movement across the major language models. Quick wins do exist: implementing FAQ schema can lift your AI Overview citations within 8 to 12 weeks.

Building lasting brand-citation share against established competitors takes much longer. The underlying training-data cycles for these AI systems are slow, and models need sustained, consistent signals before they change the brands they recommend.
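The FAQ schema quick win mentioned above is one of the simplest pieces of markup to generate. Below is a minimal sketch that builds a schema.org `FAQPage` node from question and answer pairs; the helper name `faq_page_jsonld` is hypothetical, and the sample pair is taken from this page's own FAQ. As with any structured data, the output should be validated before deployment.

```python
import json


def faq_page_jsonld(qa_pairs):
    """Build a schema.org FAQPage node from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }


faq = faq_page_jsonld([
    ("Can you guarantee citations in ChatGPT?",
     "No. Citation rates depend on training-data cycles, retrieval algorithms, "
     "and competitive landscape, none of which AI providers expose to optimisers."),
])
print(json.dumps(faq, indent=2))
```

The question and answer text in the markup should match the visible FAQ on the page word for word.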

Our team applies the full AEO/GEO methodology to your brand, starting with a free discovery audit that includes initial prompt testing. The audit gives you immediate clarity on your current standing in AI search. For pricing context, see our guides on E-commerce SEO cost and SEO cost in Malaysia. Acting now helps your business establish a strong position before the AI search ecosystem becomes saturated.

FAQ

How do you measure AEO success?

We track brand mentions in LLM outputs across a fixed prompt set (re-tested quarterly), a schema coverage scorecard, citation share for category prompts versus competitors, traffic from AI-aware bots (llms.txt referrers, ChatGPT-User, Perplexity-User), and an entity-completeness score. Quarterly snapshot reports document the trajectory.

Is there an analytics console for AEO?

Not yet from Google or OpenAI as of mid-2026. We use third-party tools (Surfer AEO, Profound, AthenaHQ) for some signals and manual prompt-set tracking for the rest. Google Search Console shows AI Overview impressions where applicable.

Can you guarantee citations in ChatGPT?

No. Citation rates depend on training-data cycles, retrieval algorithms, and competitive landscape, none of which AI providers expose to optimisers. We can guarantee process (entity work, schema, citation-building, prompt testing) and document trajectory, but not specific citation outcomes.


Ready to talk about AEO and GEO Services?

Our AEO and GEO retainers are senior-led and tied to revenue. Free discovery audit, no obligation.